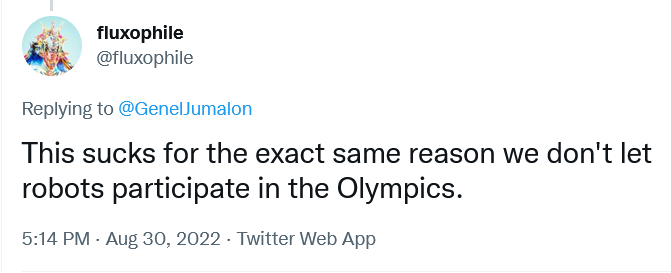

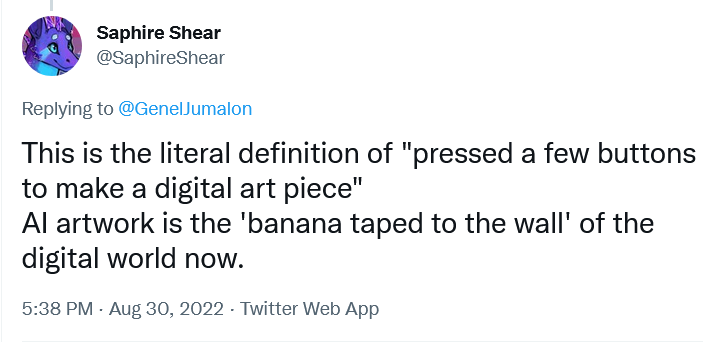

While more and more people are using AI-powered chatbots like ChatGPT, that’s not to say that people are trusting their outputs. Although ChatGPT has been hailed as a potential replacement for Google and Wikipedia, and as a bona fide disruptor of education, a recent survey found that when it comes to information about important issues like the 2024 U.S. election, its users overwhelmingly distrust it.

A familiar refrain in contemporary AI discourse is that while the programs that exist now have significant flaws, what’s most exciting about AI is its potential. However, for chatbots and other AI programs to play the roles in our lives that techno-optimists foresee, people will have to start trusting them. Is such a thing even possible?

Addressing this question requires thinking about what it means to trust in general, and whether it is possible to trust a machine or an AI in particular. There is one sense in which it certainly does seem possible, namely the sense in which “trustworthy” means something like “reliable”: many of the machines that we rely on are, indeed, reliable, and thus ones that we at least describe as things that we trust. If chatbots fix many of their current problems – such as their propensity to fabricate information – then perhaps users would be more likely to trust them.

However, when we talk about trust we are often talking about something more robust than mere reliability. Instead, we tend to think about the kind of relationship that we have with another person, usually someone we know pretty well. One kind of trusting relationship we have with others is based on our having each other’s best interests in mind: in this sense, trust is an interpersonal relationship that exists because of familiarity, experience, and good intentions. Could we have this kind of relationship with artificial intelligence?

This perhaps depends on how artificial or intelligent we think some relevant AI is. Some are willing, even at this point, to ascribe many human or human-like characteristics to AI, including consciousness, intentionality, and understanding. There is reason to think, however, that these claims are hyperbolic. So let’s instead assume, for the sake of argument, that AI is, in fact, much closer to machine than human. Could we still trust it in a sense that goes beyond mere reliability?

One of the hallmarks of trust is that trusting leaves one open to the possibility of betrayal, where the object of our trust turns out to not have our interests in mind after all, or otherwise fails to live up to certain responsibilities. And we do often feel betrayed when machines let us down. For example, say I set my alarm clock so I can wake up early to get to the airport, but it doesn’t go off and I miss my flight. I may very well feel a sense of betrayal towards my alarm clock, and would likely never rely on it again.

However, even if my sense of betrayal at my alarm clock is apt, it still does not indicate that I trust it in the sense of ascribing any kind of good will to it. Instead, I may have trusted it insofar as I have adopted what Thi Nguyen calls an “unquestioning attitude” towards it. In this sense, we trust the clock precisely because we have come to rely on it to the extent that we’ve stopped thinking about whether it’s reliable or not. Nguyen provides an illustrative example: a rock climber trusts their climbing equipment, not in the sense of thinking it has good intentions (since ropes and such are not the kinds of things that have intentions), but in the sense that they rely on it unquestioningly.

People may well one day incorporate chatbots into their lives to such a degree that they adopt unquestioning attitudes toward them. But our relationships with AI are, I think, fundamentally different from those that we have towards other machines.

Part of the reason we form unquestioning attitudes towards pieces of technology is that they are predictable. When I trust my alarm clock to go off at the time I programmed it, I might trust it in the sense that I can put out of my mind the question of whether it will do what it’s supposed to. But a reason I am able to put it out of my mind is that I have every reason to believe that it will do all and only that which I’ve told it to do. Other trusting relationships that we have towards technology work in the same way: most pieces of technology that we rely on, after all, are built to be predictable. Our sense of betrayal when technology breaks is based on it doing something surprising, namely anything other than the thing that it has been programmed to do.

AI chatbots, on the other hand, are not predictable, since they can provide us with new and surprising information. In this sense, they are more akin to people: other people are unpredictable insofar as when we rely on them for information, we cannot predict what they are going to say (otherwise we probably wouldn’t be trying to get information from them).

So it seems that we do not trust AI chatbots in the way that we trust other machines. Their inability to have positive intentions and form interpersonal relationships prevents them from being trusted in the way that we trust other people. Where does that leave us?

I think there might be one different kind of trust we could ascribe to AI chatbots. Instead of thinking about them as things that have good intentions, we might trust them precisely because they lack any intentions at all. For instance, if we find ourselves in an environment in which we think that others are consistently trying to mislead us, we might not look to someone or something that has our best interests in mind, but instead to that which simply lacks the intention to deceive us. In this sense, neutrality is the most trustworthy trait of all.

Generative AI may very well be seen as trustworthy in the sense of being a neutral voice among a sea of deceivers. Since it is not an individual agent with its own beliefs, agendas, or values, and has no good or ill intentions, if one finds oneself in an environment one thinks of as untrustworthy, then AI chatbots may be considered a trustworthy alternative.

A recent study suggests that some people may trust chatbots in this way. It found that the strength of people’s beliefs in conspiracy theories dropped after they had a conversation with an AI chatbot. While the authors of the study do not propose a single explanation as to why this happened, part of the explanation may lie in the user trusting the chatbot: since someone who believes in conspiracy theories is likely to also think that people are generally trying to mislead them, they may regard something that they perceive as neutral as trustworthy.

While it may then be possible to trust an AI because of its perceived neutrality, it can only be as neutral as the content it draws from; no information comes from nowhere, despite appearances. So while it may be conceptually possible to trust AI, the question of whether one should do so at any point in the future remains open.