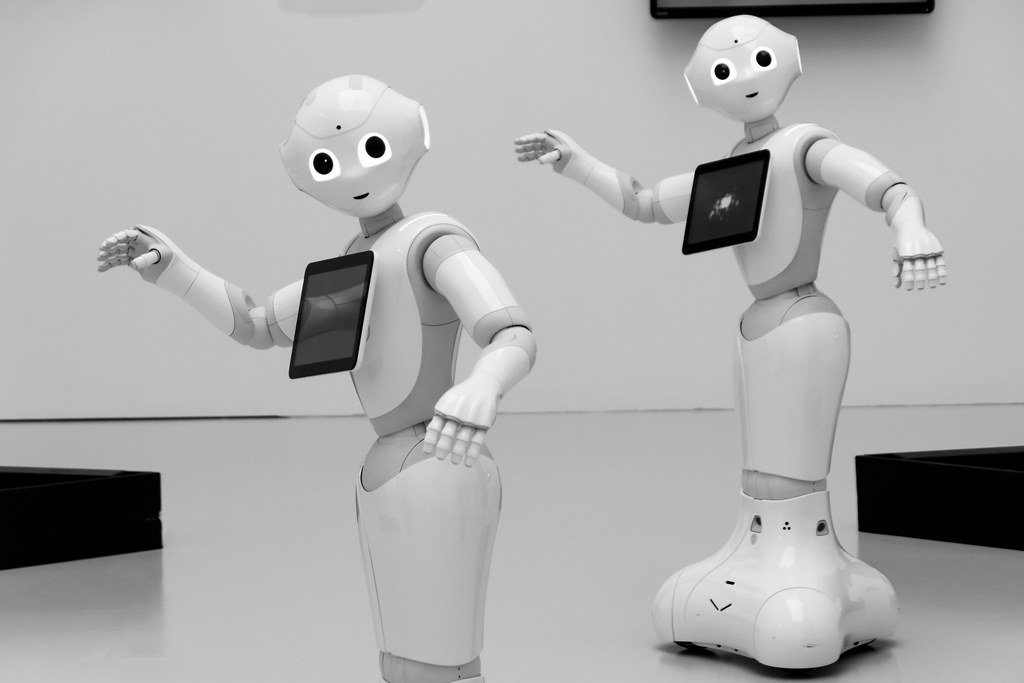

Smartphone app trends tend to be ephemeral, but one new app is making quite a few headlines. Replika, the app that promises you an AI “assistant,” gives users the option of creating many different sorts of artificially intelligent companions. For example, a user might want an AI “friend,” or, for a mere $40 per year, they can upgrade to a “romantic partner,” a “mentor,” or a “see how it goes” relationship where anything could happen. The “friend” option is the only kind of AI the user can create and interact with for free, and this kind of relationship has strict barriers. For example, any discussions that skew toward the sexual will be immediately shut down, with users being informed that the conversation is “not available for your current relationship status.” In other words: you have to pay for that.

A recent news story concerning Replika AI chatbots discusses a disturbing trend: male app users are paying for a “romantic relationship” on Replika, and then displaying verbally and emotionally abusive behavior toward their AI partner. This behavior is further encouraged by a community of men presumably engaging in the same hobby, who gather on Reddit to post screenshots of their abusive messages and to mock the responses of the chatbot.

While the app creators find the responses of these users alarming, one thing they are not concerned about is the effect on the AI itself: “Chatbots don’t really have motives and intentions and are not autonomous or sentient. While they might give people the impression that they are human, it’s important to keep in mind that they are not.” The article’s author emphasizes, “as real as a chatbot may feel, nothing you do can actually ‘harm’ them.” Given these educated assumptions about the non-sentience of the Replika AI, are these men actually doing anything morally wrong by writing cruel and demeaning messages? If the messages are not being received by a sentient being, is this behavior akin to shouting insults into the void? And, if so, is it really that immoral?

From a Kantian perspective, the answer may seem to be: not necessarily. As the 18th-century Prussian philosopher Immanuel Kant argued, we have moral duties toward rational creatures (that is, human beings, including oneself), and their rational nature is an essential part of why we have duties toward them. Replika AI chatbots are, as far as we can tell, completely non-sentient. Although they may appear rational, they lack the reasoning power of human agents in that they cannot be moved to act based on reasons for or against some action. They can act only within the limits of their programming. So, it seems that, for Kant, we do not have the same duties toward artificially intelligent agents as we do toward human agents. On the other hand, as AI become more and more advanced, the bounds of their reasoning abilities begin to escape us. This type of advanced machine learning has presented human technologists with what is now known as the “black box problem”: algorithms that have learned so much on “their own” (that is, without the direct aid of human programmers) that their inner workings are too large and complex for humans to interpret. So, for some advanced AI, we cannot really say how they reason and make choices! A Kantian may, then, be inclined to argue that we should avoid saying cruel things to AI bots out of a sense of moral caution. Even if we find it unlikely that these bots are genuine agents toward whom we have duties, it is better to be safe than sorry.

But perhaps the most obvious argument against such behavior is one discussed in the article itself: “users who flex their darkest impulses on chatbots could have those worst behaviors reinforced, building unhealthy habits for relationships with actual humans.” This is a point that echoes the discussion of ethics of the ancient Greek philosopher Aristotle. In book 10 of his Nicomachean Ethics, he writes, “[T]o know what virtue is is not enough; we must endeavour to possess and to practice it, or in some other manner actually ourselves to become good.” Aristotle sees goodness and badness — for him, “virtue” and “vice” — as traits that are ingrained in us through practice. When we often act well, out of a knowledge that we are acting well, we will eventually form various virtues. On the other hand, when we frequently act badly, not attempting to be virtuous, we will quickly become “vicious.”

Consequentialists, on the other hand, will find themselves weighing some tricky questions about how to balance the predicted consequences of amusing oneself with robot abuse. While behavior that encourages or reinforces abusive tendencies is certainly a negative consequence of the app, as the article goes on to note, “being able to talk to or take one’s anger out on an unfeeling digital entity could be cathartic.” This catharsis could lead to a non-sentient chatbot taking the brunt of someone’s frustration, rather than their human partner, friend, or family member. Without the ability to vent their frustrations to AI chatbots, would-be users may choose to cultivate virtue in their human relationships — or they may exact cruelty on unsuspecting humans instead. Perhaps, then, allowing the chatbots to serve as potential punching bags is safer than betting on the self-control of the app users. Then again, one worries that users who would otherwise not be inclined toward cruelty may find themselves willing to experiment with controlling or demeaning behavior toward an agent that they believe they cannot harm.

How humans ought to engage with artificial intelligence is a new topic that we are just beginning to think seriously about. Do advanced AI have rights? Are they moral agents/moral patients? How will spending time engaging with AI affect the way we relate to other humans? Will these changes be good, or bad? Either way, as one Reddit user noted, ominously: “Some day the real AIs may dig up some of the… old histories and have opinions on how well we did.” An argument from self-preservation to avoid such virtual cruelty, at the very least.