There is a lot of debate surrounding the ethics of artificial intelligence (AI) writing software. Some people believe that using AI to write articles or create content is unethical because it takes away opportunities from human writers. Others believe that AI writing software can be used ethically as long as the content is disclosed as being written by an AI. At the end of the day, there is no easy answer to whether or not we should be using AI writing software. It depends on your personal ethical beliefs and values.

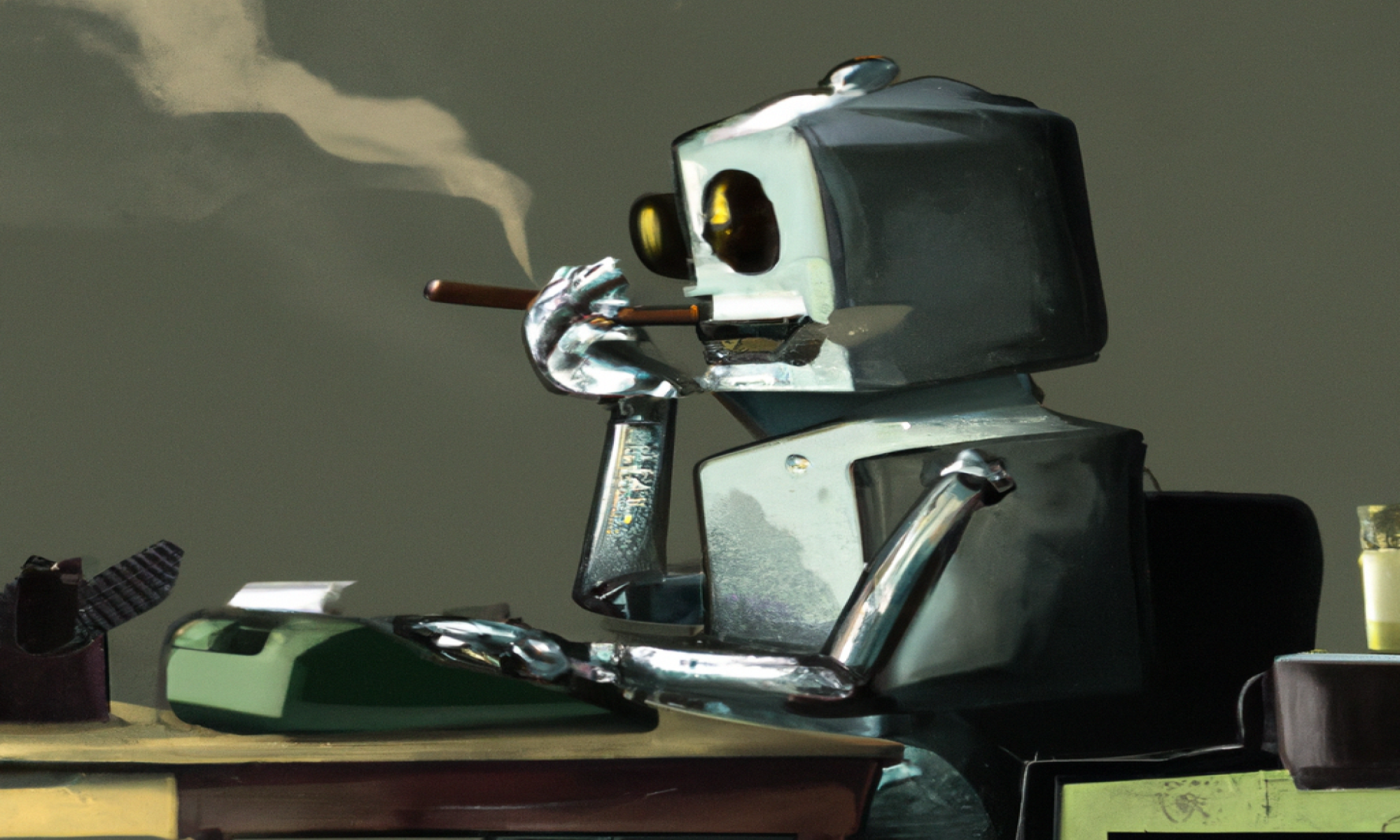

That paragraph wasn’t particularly compelling, and you probably didn’t learn much from reading it. That’s because it was written by an AI program: in this case, I used a site called Copymatic, although there are many others to choose from. Here’s how Copymatic describes its services:

Use AI to boost your traffic and save hours of work. Automatically write unique, engaging and high-quality copy or content: from long-form blog posts or landing pages to digital ads in seconds.

Through some clever programming, the website takes in prompts on the topic you want to write about (for this article, I started with “the ethics of AI writing software”), scours the web for pieces of information that match those prompts, and patches them together in a coherent way. It can’t produce new ideas, and, in general, the more work it has to do the less coherent the text becomes. But if you’re looking for content that sounds like a book report written by someone who only read the back cover, these kinds of programs could be for you.

AI writing services have received a lot of attention for their potential to automate something that has, thus far, eluded the grasp of computers: stringing words together in a way that is meaningful. And while the first paragraph is unlikely to win any awards for writing, we can imagine cases in which an automated process to produce writing like this could be useful, and we can easily imagine these programs getting better.

The AI program has identified an ethical issue, namely taking away jobs from human writers. But I don’t need a computer to do ethics for me. So instead, I’ll focus on a different negative consequence of AI writing, what I’ll call epistemic dilution.

Here’s the problem: there is a ridiculous number of articles of a certain type online, with more being written by the minute. These articles are not written to be especially informative, but are instead created to direct traffic toward a website in order to generate ad revenue. Call them SEO-bait: articles that are written to be search-engine optimized so that they can end up on early pages of Google searches, at the expense of being informative, creative, or original.

Search engine optimization is, of course, nothing new. But SEO-bait articles dilute the online epistemic landscape.

While there’s good and useful information out there on the internet, the sheer quantity of articles written solely for getting the attention of search engines makes good information all the more difficult to find.

You’ve probably come across articles like these: they are typically written on frequently searched topics – like health, finances, automobiles, and tech – as well as popular hobbies – like video games, cryptocurrencies, and marijuana (or so I’m told). You’ve also probably experienced the frustration of wading through a sea of practically identical articles when looking for answers to questions, especially if you are faced with a pressing problem.

These articles have become such a problem that Google has recently modified its search algorithm to make SEO-bait less prominent in search results. In a recent announcement, Google notes how many have “experienced the frustration of visiting a web page that seems like it has what we’re looking for, but doesn’t live up to our expectations,” and, in response, that they will launch a “helpful content update” to “tackle content that seems to have been primarily created for ranking well in search engines rather than to help or inform people.”

Of course, anyone looking for information online needs to sift out the useful information from the useless; that much is nothing new. Articles written by AI programs, however, will only make this problem worse. As the Copymatic copy says, this kind of content can be written in mere seconds.

Epistemic dilution is not only obnoxious in that it makes it harder to find relevant information, but it’s also potentially harmful. For instance, health information is a frequently searched topic online and is a particular target of SEO-bait. If someone needs health advice and is presented with uninformative articles, they could easily end up accepting bad information. Furthermore, the sheer quantity of articles providing similar information may create a false sense of consensus: after all, if all the articles are saying the same thing, it may be interpreted as more likely to be true.

The fact that AI writing does not create new content but merely reconstitutes dismantled bits of existing content also means that low-quality information could easily propagate: content from a popular article with false information could be targeted by AI writing software, which could then result in that information getting increased exposure by being presented in numerous articles online. While there may very well be useful applications for writing produced by AI programs, the internet’s endless appetite for content combined with incentives to produce disposable SEO-bait means that these kinds of programs may very well end up being more of a nuisance than anything else.