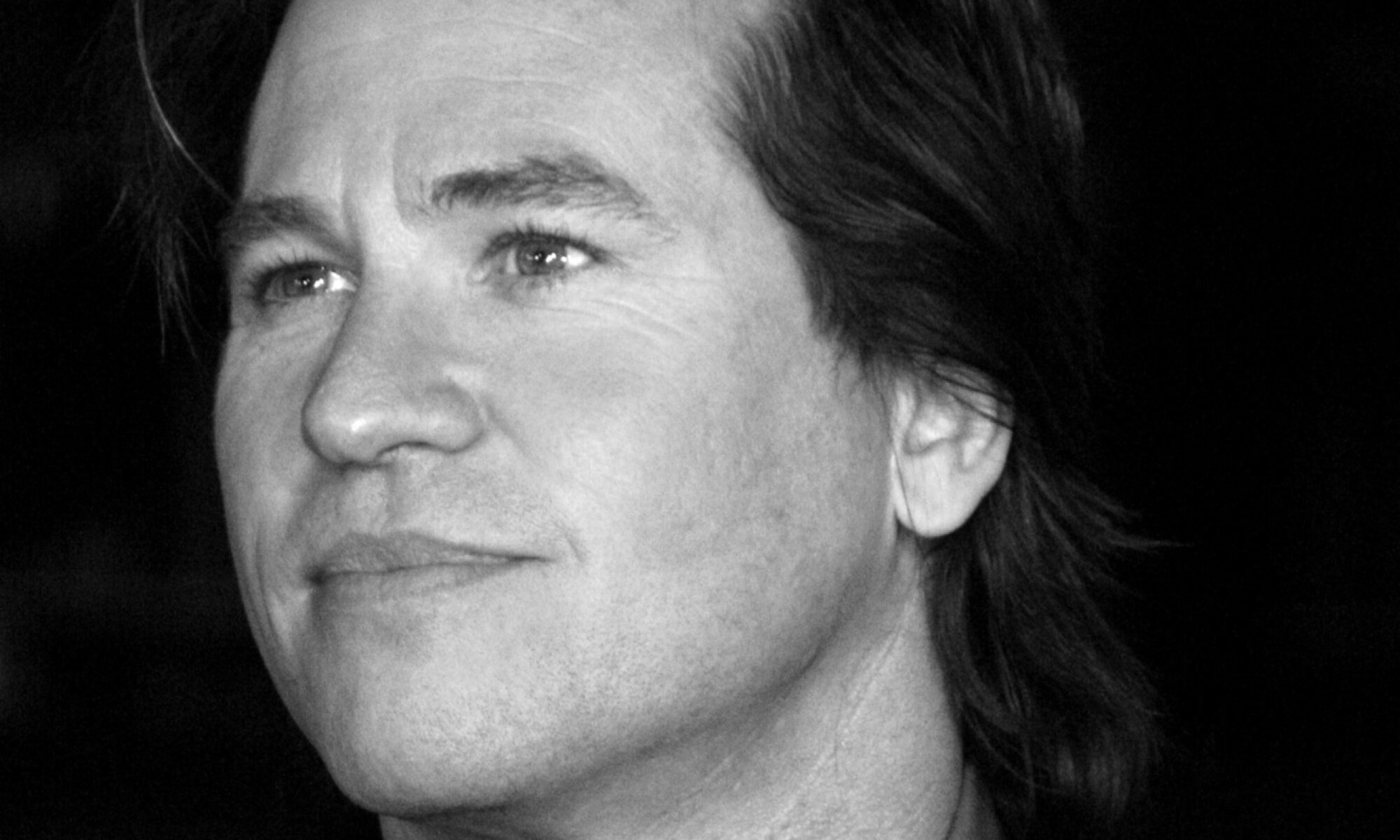

Last week, Variety shared an exclusive first look of Val Kilmer in the upcoming film As Deep as the Grave. This might not seem all that newsworthy, except for the fact that Kilmer passed away without filming a single scene in the movie. He will instead be appearing via the use of generative AI. It’s not the first time AI – or some other form of movie magic – has been used to bring actors back from the dead. And, as in those cases, the “resurrection” of Kilmer has been met with no small amount of controversy. It’s worth considering, then, what might be wrong with this practice. Specifically, might this AI-resurrection in some way harm Kilmer?

The idea that we might be able to harm someone after their death isn’t without precedent. Most of us think that we should respect someone’s funerary wishes – that it would be wrong to cremate someone who explicitly requested to be buried. Likewise, there are taboos around speaking ill of the dead and wantonly destroying the things they created. Granted, the wrongness of these actions is sometimes grounded in the harms that will be caused to other (still living) people. We might, for example, refrain from maligning a deceased uncle so as not to upset his young children. But there are plenty of cases in which the wrongness of an action seems grounded exclusively in the idea that it will harm the deceased.

This is what we refer to as “posthumous harm.”

Despite the intuitive examples above, it’s notoriously difficult to pin down the basis of posthumous harm. This is made even more difficult if we accept something like the Termination Thesis – that is, the claim that when we die, we simply cease to exist. Hedonism, for example, claims that something can only be bad for us if it causes us pain. But if death is the end of our existence, then we can no longer feel pain – and so nothing that happens posthumously can harm us. But Hedonism isn’t the only way to assess what’s good and bad for a person. A commonly cited alternative is Desire Theory. This view says that something is bad for us if it frustrates our desires. It’s an attractive approach, since it allows us to explain the badness of things that might never cause us pain. Suppose, for example, that several of my students make fun of me behind my back – yet manage to ensure I never find out. Hedonism would say that this isn’t bad for me. Why? Because it never causes me pain. Desire Theory, on the other hand, would provide the more intuitively correct answer that my students’ words are bad for me. Why? Because I desire to be respected by my students. Their behavior means that this desire is frustrated – which is bad for me, even if I never find out.

What’s important for our purposes is that it seems our desires can be frustrated after we’re dead. If I desire to be cremated, then burying my corpse frustrates that desire. In this way, the Desire Theorist can explain how events that happen after my death can nevertheless harm me.

Perhaps, then, Desire Theory can explain the basis of our concern with AI-resurrection. Namely: this practice will harm a deceased actor where using their likeness will violate certain desires that they held while alive. But that’s what makes Kilmer’s case so interesting: he desired to appear in the film. Kilmer was cast in the role five years prior to his death in 2025 – but was, due to his worsening medical condition, unable to complete any filming. As Coerte Voorhees – writer and director of the film – tells it:

“[Kilmer’s] family kept saying how important they thought the movie was and that Val really wanted to be a part of this. He really thought it was [an] important story that he wanted his name on. It was that support that gave me the confidence to say, okay let’s do this. Despite the fact some people might call it controversial, this is what Val wanted.”

Indeed, Kilmer had form for supporting this kind of technology, assisting in the creation of an AI-powered speaking voice to reprise his role as Tom “Iceman” Kazansky in 2022’s Top Gun: Maverick. As Kilmer’s daughter notes:

“He always looked at emerging technologies with optimism as a tool to expand the possibilities of storytelling. This spirit is something that we are all honoring within this specific film, of which he was an integral part.”

This might seem like the end of the story. By all accounts, Kilmer would have desired to have his likeness used in this way – so engaging in AI-resurrection does not harm him posthumously. If, on the other hand, an actor does express a desire to the contrary, this may provide a basis for saying that AI-resurrection of that actor would harm them posthumously.

But there’s one final complication – and it’s found in a general concern about what kinds of desires are actually relevant to our wellbeing in the first place. In a standard case, if a Desire Theorist wants to know if X is good or bad for someone, they simply have to ask “does this person now desire X?” If they do, X is good for them. If they don’t, X is bad for them. But things get tricky when we start talking about desires that depend on something happening in the future. Long ago, I desired (and in fact, trained) to be a lawyer. But now I’m a philosopher. Does this mean I’m harmed by my current career? Clearly not.

We might avoid this problem by focusing exclusively on my most recent desire (which, incidentally, is to be a philosopher). But that move will create all kinds of additional problems – especially in the case of desires about events after we die. Suppose I spend my entire life desiring to be cremated, but develop a sudden desire to be buried in the final five minutes of my life. Would burial harm me or not? In order to answer this, we have to establish which of my desires should be given precedence. Is it my most recent – but short-lived – desire to be buried? Or is it my less-recent – but long-lived – desire to be cremated? Suppose, to complicate matters further, I vacillated constantly between desires – changing my mind every day. I then just so happen to die on a day when I desire burial – even though I desired cremation the day before, and would return to desiring cremation the very next day.

These complications have caused some authors to insist that Desire Theory should only pay attention to future-independent desires – that is, desires that occur simultaneously with the occurrence (or non-occurrence) of what is being desired. But such a limitation automatically precludes posthumous harm. Why? Because (assuming the Termination Thesis is true) we cannot have desires after we die. If that’s the case, then neither Hedonism nor Desire Theory can explain the wrongness of AI-resurrection. If we still want to object to this practice, we’ll need to look elsewhere for the reason.